
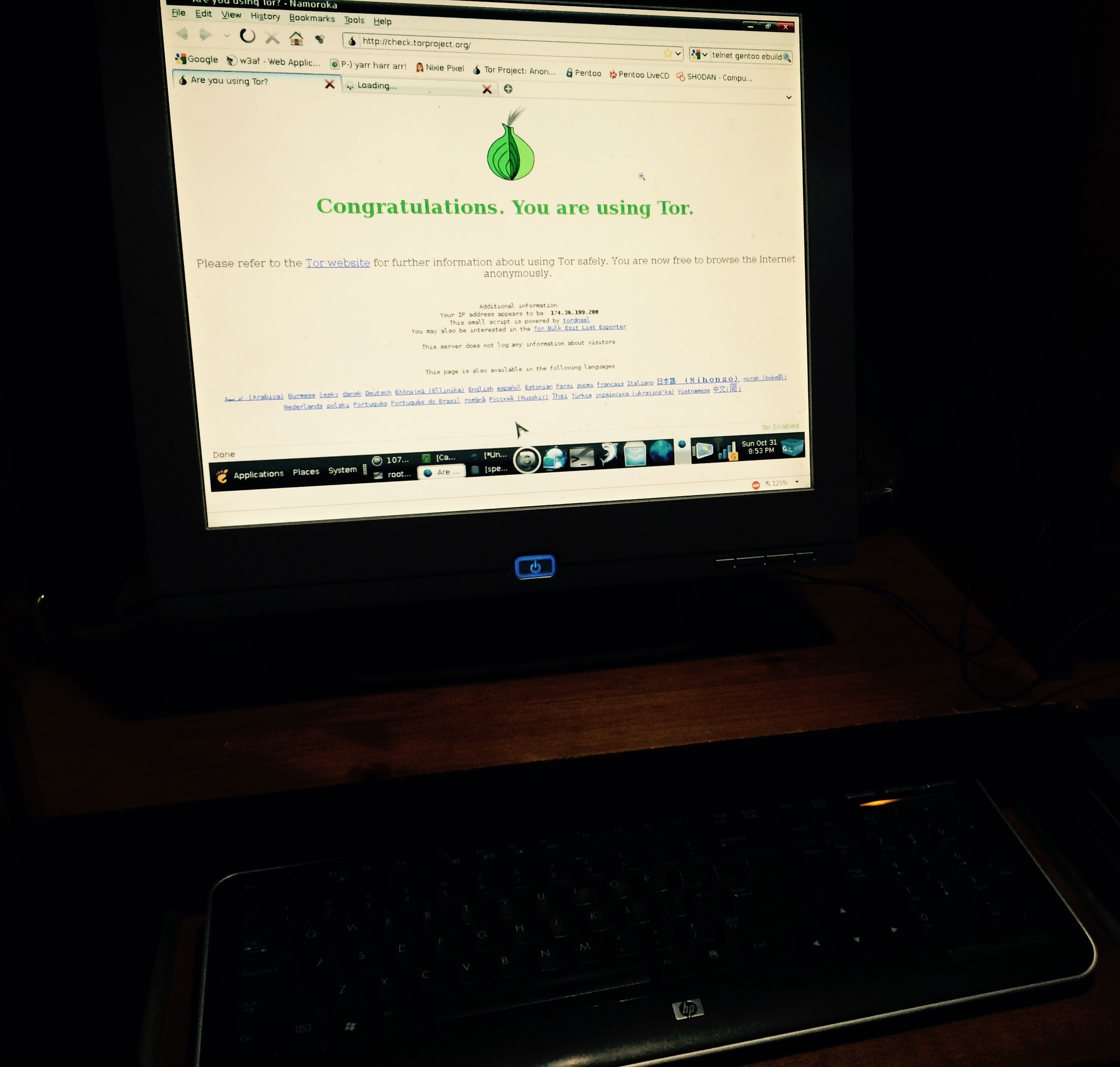

Tor is quite literally the king of dark web browsers, and it's almost impossible to find anyone who would dispute that claim. The first step in accessing the dark web or deep web with Tor is simply to download the browser from the Tor Project's website and install it. Even though these are the best deep web browsers, do not download any of them without first masking your IP address with a VPN.

Unlike browsing the depths of the dark web, visiting sites on the deep web is just as safe as browsing any other website. While the dark web requires a special browser to access anonymous websites, anyone can find information on the deep web through a regular browser such as Chrome or Safari.

Torch, one of the best deep web search engines for beginners, has one of the largest indexes on the deep web and claims more than a million hidden page results. Spokeo, best for public data records, is all about the people-centric side of deep web search. Another deep web search engine has two billion indexed items from libraries worldwide, including links that are generally available only through a database search.

Fuzzing, a technique for negative testing of programs using randomly mutated or generated input data, is responsible for the discovery of thousands of bugs in software ranging from web browsers to video players. As a stochastic process, however, fuzzing is an inherently inefficient way to discover bugs that reside in the deep logic of programs, because the preconditions along a path compound in complexity as the path grows longer. Advances in fuzzing therefore focus on increasing the number of bugs found and reducing the time needed to find them by applying static, dynamic, and symbolic binary analysis techniques.

We propose a novel system that overcomes this limitation by abstracting path-constraining preconditions from the statement level to the function level. It does so by identifying impeding functions: functions that prevent control flow from proceeding. REFACE is an end-to-end system that enhances the capabilities of an existing fuzzer by generating variant binaries that present an easier-to-fuzz interface, expanding an ongoing fuzzing campaign with minimal offline overhead. REFACE operates entirely on binary programs, requires no source code or symbols to run, and is fuzzer-agnostic.

During evaluation against an unmodified state-of-the-art fuzzer with no augmentation, we attain a significant improvement in the speed of reaching maximum coverage, rediscover one known bug, and discover one possible new bug in a binary program. This enhancement is a step in a new direction: abstracting away code that has historically presented a significant barrier to fuzzing. It aims to make incremental progress through several ancillary dataflow analysis techniques with potentially wide applicability.
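To make the idea of an impeding function concrete, here is a toy, source-level sketch in Python. It is not REFACE, which works on binaries without source or symbols; every name here (`checksum_ok`, `parse`, `parse_variant`, `mutate`, `fuzz`, the seed bytes) is hypothetical. The impeding function is a CRC32 check: a randomly mutated input has roughly a 1 in 2^32 chance of satisfying a 4-byte checksum, so the logic behind it is effectively unreachable for a blind fuzzer. The variant swaps that function for an always-true stub, which is one simple way to illustrate a "variant with an easier-to-fuzz interface."

```python
import random
import zlib


def checksum_ok(data: bytes) -> bool:
    """Hypothetical impeding function: the last 4 bytes must equal the CRC32 of the rest."""
    if len(data) < 5:
        return False
    body, tag = data[:-4], data[-4:]
    return zlib.crc32(body) == int.from_bytes(tag, "little")


def parse(data: bytes, check=checksum_ok) -> set:
    """Toy target program; returns the branch labels it reached (a stand-in for coverage)."""
    reached = {"entry"}
    if not check(data):          # a blind fuzzer almost never gets past this check
        return reached
    reached.add("checksum_passed")
    body = data[:-4]
    if body[:1] == b"F":         # deeper logic guarded by the impeding function
        reached.add("magic_F")
        if len(body) > 8:
            reached.add("long_record")
    return reached


def parse_variant(data: bytes) -> set:
    """Variant target: the impeding function is replaced by a stub that always succeeds."""
    return parse(data, check=lambda _data: True)


def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Apply 1 to 4 random byte overwrites or insertions to a copy of the seed."""
    data = bytearray(seed)
    for _ in range(rng.randint(1, 4)):
        if rng.random() < 0.5:
            data[rng.randrange(len(data))] = rng.randrange(256)
        else:
            data.insert(rng.randrange(len(data) + 1), rng.randrange(256))
    return bytes(data)


def fuzz(target, seed: bytes, iterations: int, rng_seed: int = 0) -> set:
    """Minimal mutation fuzzer that accumulates the coverage labels reached by each input."""
    rng = random.Random(rng_seed)
    coverage = set()
    for _ in range(iterations):
        coverage |= target(mutate(seed, rng))
    return coverage


if __name__ == "__main__":
    seed = b"FUZZ-RECORD\x00\x00"   # 13-byte seed starting with the magic byte 'F'
    print("original:", sorted(fuzz(parse, seed, 2000)))
    print("variant: ", sorted(fuzz(parse_variant, seed, 2000)))
```

Running the script should show the original target stuck at `entry` while the variant reaches the guarded branches. Because a stubbed check can admit inputs that the original program would reject, any crash found on a variant still has to be confirmed against the unmodified program (in this toy, for instance, by appending a correct CRC32); that step is omitted here.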
